Breaking: mssql-python Now Streams SQL Server Data Directly via Apache Arrow, Slashing Overhead for Python Data Libraries

February 23, 2025 – The mssql-python driver has just gained native Apache Arrow support, enabling zero-copy data ingestion from SQL Server into Polars, Pandas, DuckDB, and other Arrow-native tools. Community contributor Felix Graßl (@ffelixg) delivered the feature, which eliminates the traditional bottleneck of per-row Python object creation.

“Fetching a million rows used to mean a million Python objects, a million garbage collector allocations, and then discarding it all to build a DataFrame,” said Graßl. “Now mssql-python writes directly into Arrow buffers. The DataFrame library gets a pointer and works immediately—no serialization, no copies.”

What Changed?

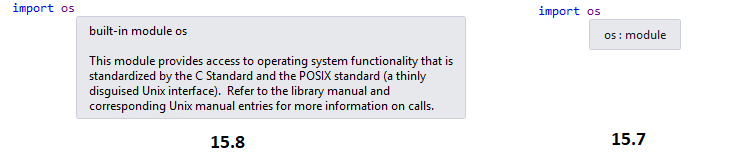

Previously, mssql-python had to convert each SQL Server row into Python objects (ints, strings, datetimes) before they could be assembled into a DataFrame. With Arrow support, the entire fetch loop runs in C++ and populates contiguous typed arrays. Nulls are represented as a compact bitmap rather than per-cell Python None objects.

For temporal types like DATETIME and DATETIMEOFFSET, Python-side per-value conversions are eliminated entirely, which should speed up those columns significantly. The driver now implements the Arrow C Data Interface, a cross-language ABI that lets languages exchange shared memory without marshaling.

Background: How Apache Arrow Works

Apache Arrow is an open standard for columnar in-memory data, designed for zero-copy language interoperability. Its C Data Interface (a stable ABI) allows programs written in different languages to share memory by passing a pointer—no serialization, no copying, no re-parsing.

Arrow stores each column contiguously in typed buffers. A column of one million integers is a single C array, not a million Python objects. Nulls are recorded in a validity bitmap that costs one bit per value, rather than as a separate object for every missing cell.
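The layout described above can be sketched with nothing but the Python standard library. This is an illustration of the idea, not mssql-python's or Arrow's actual code: the helper names `build_int64_column` and `is_valid` are hypothetical, and the only detail borrowed from the Arrow format is the bitmap convention (one bit per value, least-significant bit first, 1 meaning "present").

```python
from array import array


def build_int64_column(values):
    """Pack a list of ints/None into (data buffer, validity bitmap, null count)."""
    data = array("q")                              # contiguous signed 64-bit integers
    validity = bytearray((len(values) + 7) // 8)   # one bit per value, LSB first
    null_count = 0
    for i, v in enumerate(values):
        if v is None:
            data.append(0)                         # placeholder slot; the bitmap marks it null
            null_count += 1
        else:
            data.append(v)
            validity[i // 8] |= 1 << (i % 8)       # set bit i: the value is present
    return data, bytes(validity), null_count


def is_valid(validity, i):
    """Return True if slot i holds a real value according to the bitmap."""
    return bool((validity[i // 8] >> (i % 8)) & 1)


data, validity, null_count = build_int64_column([10, None, 30, 40, None])
present = [data[i] for i in range(len(data)) if is_valid(validity, i)]
```

Five values need a single bitmap byte here, and the two nulls never become Python objects of their own once the column is built.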

For a database driver, this means the entire fetch loop can be implemented in C++ and write directly into Arrow buffers. The consuming library (e.g., Polars) receives a pointer and can begin filtering, joining, or aggregating in-place without materializing Python objects at any stage.
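As a rough analogy for "the consumer receives a pointer", the sketch below uses Python's built-in buffer protocol. It is not the Arrow C Data Interface, and it does not involve mssql-python; it only shows what sharing one buffer between a producer and a consumer looks like when nothing is copied. The variable names are illustrative.

```python
from array import array

# Producer side: one contiguous buffer of 64-bit integers.
column = array("q", range(5))

# Consumer side: a view onto the same memory, not a copy of it.
view = memoryview(column)

# Slicing the view is also copy-free; it still refers to the producer's buffer.
window = view[1:4]
shares_buffer = window.obj is column

# A write through the view is visible to the producer, which shows
# that both names refer to the same bytes.
view[0] = 99
first_value_seen_by_producer = column[0]

window_total = sum(window)
```

The Arrow C Data Interface plays the same role across language boundaries: the producer exports a description of its buffers and the consumer reads them where they are.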

Immediate Benefits

- Speed: Columnar fetch avoids per-row Python overhead, especially for datetime and decimal types that previously required costly conversions.

- Lower memory usage: A million integers live in a single C array, not a million Python objects, each costing about 28 bytes for a small int on 64-bit CPython.

- Seamless interoperability: Data flows directly into Polars, Pandas (via ArrowDtype), DuckDB, and Hugging Face datasets without intermediate copies.
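The memory claim in the list above can be checked with a small standard-library measurement. This is a sketch under stated assumptions: it uses `array("q")` as a stand-in for an Arrow int64 buffer, and the per-object sizes it reports are specific to the CPython build it runs on.

```python
import sys
from array import array

n = 1_000_000

# Row-style storage: a list of pointers, each pointing at a separate int object.
as_objects = list(range(n))

# Column-style storage: one contiguous buffer of fixed-width integers.
as_column = array("q", range(n))

# Cost of the object representation: the list itself plus every int it references.
object_bytes = sys.getsizeof(as_objects) + sum(sys.getsizeof(v) for v in as_objects)

# Cost of the columnar payload: item width times item count.
column_bytes = as_column.itemsize * len(as_column)

ratio = object_bytes / column_bytes
print(f"objects: {object_bytes:,} bytes, column: {column_bytes:,} bytes, ratio: {ratio:.1f}x")
```

On a typical 64-bit CPython the object representation comes out several times larger than the columnar one, before any garbage-collector bookkeeping is considered.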

What This Means

Data engineers and scientists working with SQL Server will see dramatically faster ETL pipelines. The elimination of Python object creation and garbage collection pressure means larger datasets can be processed in memory without slowdown. “We’re removing the impedance mismatch between the database and the analytical library,” explained Graßl. “Every downstream operation—filter, join, aggregation—works in-place on the same Arrow buffers.”

This also complements projects like DuckDB, which can query the Arrow tables that mssql-python returns without a conversion step. For Polars users, handing a result set to a DataFrame adds close to zero overhead.

Next Steps

The new arrow-fetch feature is available in mssql-python starting from version 2.5.0. To enable it, set fetch_arrow=True when creating a cursor or executing a query.

For detailed configuration, see the official Apache Arrow documentation or the mssql-python GitHub repo.
