How to Use Apache Arrow for Lightning-Fast Data Fetching from SQL Server with mssql-python

Introduction

Fetching millions of rows from SQL Server used to be a bottleneck: each row became a Python object, triggering garbage collector overhead and memory bloat. With the latest mssql-python driver, you can now retrieve data directly as Apache Arrow structures—a zero-copy, columnar format that bypasses Python object creation entirely. This feature, contributed by community developer Felix Graßl (@ffelixg), integrates seamlessly with Polars, Pandas (via ArrowDtype), DuckDB, and other Arrow-native tools. In this guide, you'll learn how to set up and use this high-performance fetch path, step by step.

What You Need

- Python 3.8 or later installed on your system.

- mssql-python driver with Arrow support (version 1.2.0 or higher recommended).

- A SQL Server instance (on-premises, Azure SQL Database, or SQL Server on Linux) with connection credentials.

- An Arrow-native library of your choice: Polars, Pandas (with pyarrow backend), DuckDB, or others.

- A Python environment manager (e.g., venv or conda) to keep dependencies isolated.

Step-by-Step Guide

Step 1: Install the Required Packages

Open your terminal and install the latest mssql-python driver and your preferred Arrow-native library. For this guide, we'll use Polars as an example.

pip install mssql-python polars

If you plan to use Pandas with Arrow support, also install pyarrow:

pip install pandas pyarrow

For DuckDB:

pip install duckdb mssql-python

Step 2: Establish a Connection to SQL Server

Create a connection object using mssql_python.connect() with your server details (the package is installed as mssql-python but imported as mssql_python). The connection handles authentication and session state, same as the non-Arrow path.

import mssql_python

conn = mssql_python.connect(
    host='your_server.database.windows.net',
    database='your_database',
    user='your_username',
    password='your_password',
    port=1433
)

Note: Use an environment variable or a secrets manager for credentials in production.
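One way to follow that advice is to read the connection settings from environment variables at startup. The sketch below is only an illustration: the variable names (MSSQL_SERVER, MSSQL_DATABASE, MSSQL_USER, MSSQL_PASSWORD, MSSQL_PORT) and the load_db_settings helper are hypothetical, so substitute whatever your deployment defines.

```python
import os
from typing import Mapping, Optional

# Hypothetical variable names; use whatever your deployment defines.
REQUIRED_VARS = ("MSSQL_SERVER", "MSSQL_DATABASE", "MSSQL_USER", "MSSQL_PASSWORD")


def load_db_settings(env: Optional[Mapping[str, str]] = None) -> dict:
    """Collect connection settings from the environment, failing fast if any are missing."""
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {
        "host": env["MSSQL_SERVER"],
        "database": env["MSSQL_DATABASE"],
        "user": env["MSSQL_USER"],
        "password": env["MSSQL_PASSWORD"],
        # The port is optional; fall back to SQL Server's default.
        "port": int(env.get("MSSQL_PORT", "1433")),
    }
```

The returned dictionary uses the same keys as the connect call in this step, so it can be unpacked into that call instead of hard-coding the values in source.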

Step 3: Execute a Query with Arrow Output

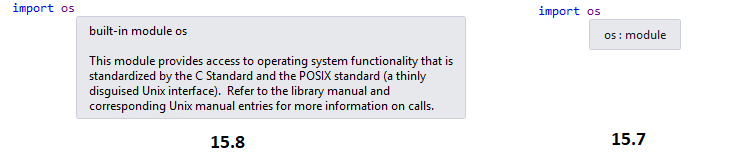

The magic happens in the cursor.execute() method. Set the arrow=True parameter to instruct the driver to fetch results as Apache Arrow structures.

with conn.cursor() as cursor:
    cursor.execute('SELECT * FROM your_table', arrow=True)
    result = cursor.fetchall()  # returns an Arrow Table (pyarrow.lib.Table)

Behind the scenes, the entire fetch loop runs in C++, writing column values directly into Arrow buffers. No Python objects are created per row—only the final Arrow Table object materializes in Python. This dramatically reduces garbage-collector pressure and speeds up data retrieval, especially for temporal types like DATETIME and DATETIMEOFFSET.
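To get a feel for why skipping per-row Python objects matters, the following sketch compares the approximate memory footprint of row tuples with that of contiguous typed columns. It uses the standard library's array module purely as a stand-in for Arrow buffers; it does not touch the driver or Arrow itself, and the sizes reported by sys.getsizeof are rough estimates.

```python
import sys
from array import array


def row_object_bytes(n):
    """Approximate bytes used when every row is a tuple of Python objects."""
    rows = [(i, float(i)) for i in range(n)]
    total = sys.getsizeof(rows)
    for row in rows:
        # Count the tuple itself plus the int and float objects it references.
        total += sys.getsizeof(row) + sum(sys.getsizeof(v) for v in row)
    return total


def columnar_bytes(n):
    """Approximate bytes used when each column is one contiguous typed buffer."""
    ids = array("q", range(n))                          # 64-bit signed integers
    values = array("d", (float(i) for i in range(n)))   # 64-bit floats
    return sys.getsizeof(ids) + sys.getsizeof(values)


if __name__ == "__main__":
    n = 100_000
    print("row objects:", row_object_bytes(n), "bytes")
    print("columnar   :", columnar_bytes(n), "bytes")
```

The row-based layout also hands the garbage collector one tracked tuple per row, whereas the columnar layout is a couple of objects regardless of row count.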

Step 4: Convert Arrow Table to Your DataFrame

The returned object is a PyArrow Table. Convert it to your preferred DataFrame format; for Arrow-backed targets this usually happens without copying the underlying buffers.

- Polars: df = pl.from_arrow(result)

- Pandas (with ArrowDtype): df = result.to_pandas(types_mapper=pd.ArrowDtype)

- DuckDB: duckdb.sql('SELECT * FROM result') (DuckDB can read Arrow tables natively)

For example, with Polars:

import polars as pl

df = pl.from_arrow(result)

print(df)

The DataFrame library receives a pointer to the same memory: no serialization and, for most column types, no copies. Subsequent operations such as filters, joins, and aggregations read directly from those Arrow buffers, keeping your pipeline efficient.

Tips for Optimal Use

- Prefer columnar fetch for large result sets. The Arrow path excels when fetching thousands or millions of rows. For tiny result sets, the overhead of building Arrow buffers may outweigh benefits—stick with the default row-based fetch.

- Monitor memory with nullable columns. Arrow uses a compact bitmap for nulls instead of per-cell None objects, but fixed-width columns still reserve a value slot for every row, null or not. For highly sparse data, consider filtering early in the SQL query.

- Combine with predicates. To minimize data transfer, push filters (WHERE clauses) to SQL Server. The Arrow fetch will then only receive rows that satisfy your conditions, further reducing memory and CPU usage.

- Check driver version. Arrow support is relatively new. Ensure you have mssql-python >= 1.2.0. Run pip show mssql-python to verify.

- Experiment with temporal types. The biggest speedup often comes from datetime columns, where Python's per-value conversion is eliminated entirely. Benchmark your own queries to see the difference.

- Use the Arrow C Data Interface for cross-language workflows. If you work in a polyglot environment (C++, Python, R), Arrow’s zero-copy ABI allows direct data exchange without serialization—the same pointer can be consumed by any language that supports the Arrow C Data Interface.
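The version check from the tips can also be done from Python instead of pip show. This is a minimal sketch using only the standard library; the helper names are illustrative, the minimum version is the one this guide recommends, and pre-release suffixes such as rc1 are ignored in the comparison.

```python
import re
from importlib.metadata import PackageNotFoundError, version

MINIMUM = (1, 2, 0)  # minimum version recommended in this guide


def parse_version(text):
    """Turn the leading numeric part of '1.2.0rc1' into (1, 2, 0)."""
    match = re.match(r"\d+(?:\.\d+)*", text)
    parts = [int(p) for p in match.group().split(".")] if match else []
    # Pad short versions such as '1.2' so tuple comparison behaves as expected.
    parts += [0] * (3 - len(parts))
    return tuple(parts)


def installed_version(package="mssql-python"):
    """Return the installed version string, or None if the package is absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


def meets_minimum(package="mssql-python"):
    """True if the package is installed at or above MINIMUM."""
    found = installed_version(package)
    return found is not None and parse_version(found) >= MINIMUM


if __name__ == "__main__":
    print("installed:", installed_version())
    print("meets recommended minimum:", meets_minimum())
```

Running a check like this at application startup gives a clear error early, rather than a confusing failure the first time an Arrow fetch is attempted.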

By following these steps, you can unlock the full potential of Apache Arrow in your SQL Server data pipelines—faster fetches, lower memory usage, and seamless interoperability with modern DataFrame libraries.
